UPenn ENGR 1050 · December 2024

Cat/Dog Image Classifier

A 4-layer convolutional neural network in PyTorch, trained on 24,000 images to classify cats vs. dogs. Built as the final project for an intro engineering course that hadn't covered anything beyond a single-layer perceptron, so most of the work was teaching myself how CNNs actually function.

Why This Project?

This was the final project for an introductory engineering course where students could build anything related to class material. The most advanced AI topic covered in class was a single-layer perceptron. I wanted to go further, so I taught myself convolutional neural networks and built this classifier from scratch. I relied on outside resources to understand how CNNs work, particularly how they account for the spatial relationships between pixels rather than just their individual values, which makes them far better suited to image processing than a simple perceptron.

Architecture

The CNN has 4 convolutional layers with increasing filter counts (64 → 128 → 256 → 512), each followed by BatchNorm, ReLU activation, and MaxPool. After flattening, three fully connected layers (1024 → 256 → 1) reduce the features to a single output for binary classification, trained with BCEWithLogitsLoss. Dropout (0.5) after the first FC layer helps prevent overfitting. Training used the Adam optimizer (lr=0.0001) with a StepLR scheduler (step size 10, gamma=0.1).

Conv2d(3→64) → BN → ReLU → Pool

Conv2d(64→128) → BN → ReLU → Pool

Conv2d(128→256) → BN → ReLU → Pool

Conv2d(256→512) → BN → ReLU → Pool

Flatten → FC(32768→1024) → Dropout(0.5)

FC(1024→256) → FC(256→1) → Sigmoid

Input

128×128 RGB

Dataset

24,000 images

Split

80% train, 20% val

Epochs

150

Peak Accuracy

97.8%
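The architecture and training setup described above can be sketched in PyTorch roughly as follows. This is a minimal reconstruction, not the project's actual code: the class name `CatDogCNN`, the helper `conv_block`, the 3×3 kernels with padding 1, the 2×2 pooling, and the ReLU activations between the fully connected layers are all assumptions. They were chosen because they reproduce the stated flatten size (512 channels × 8 × 8 = 32768 for a 128×128 input). The model returns raw logits, since BCEWithLogitsLoss applies the sigmoid internally during training; the sigmoid is applied separately at inference time.

```python
import torch
from torch import nn


def conv_block(in_ch: int, out_ch: int) -> nn.Sequential:
    """Conv -> BatchNorm -> ReLU -> MaxPool, as in the diagram above."""
    # Assumption: 3x3 kernels with padding=1 keep H and W unchanged,
    # so only the 2x2 max pool halves the spatial size.
    return nn.Sequential(
        nn.Conv2d(in_ch, out_ch, kernel_size=3, padding=1),
        nn.BatchNorm2d(out_ch),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(2),
    )


class CatDogCNN(nn.Module):  # hypothetical name, not from the original project
    def __init__(self) -> None:
        super().__init__()
        self.features = nn.Sequential(
            conv_block(3, 64),     # 128x128 -> 64x64
            conv_block(64, 128),   # 64x64   -> 32x32
            conv_block(128, 256),  # 32x32   -> 16x16
            conv_block(256, 512),  # 16x16   -> 8x8
        )
        self.classifier = nn.Sequential(
            nn.Flatten(),                  # 512 * 8 * 8 = 32768 features
            nn.Linear(512 * 8 * 8, 1024),
            nn.ReLU(inplace=True),         # assumption: not shown in the diagram
            nn.Dropout(0.5),
            nn.Linear(1024, 256),
            nn.ReLU(inplace=True),         # assumption: not shown in the diagram
            nn.Linear(256, 1),             # single raw logit per image
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.classifier(self.features(x))


model = CatDogCNN()
criterion = nn.BCEWithLogitsLoss()  # applies the sigmoid internally
optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=10, gamma=0.1)

# Shape check on a dummy batch of two 128x128 RGB images.
model.eval()
with torch.no_grad():
    logits = model(torch.randn(2, 3, 128, 128))
    probs = torch.sigmoid(logits)  # sigmoid only at inference time
print(logits.shape)
```

Keeping the sigmoid out of `forward` avoids applying it twice during training, which would happen if the model output probabilities and the loss then applied its own sigmoid.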

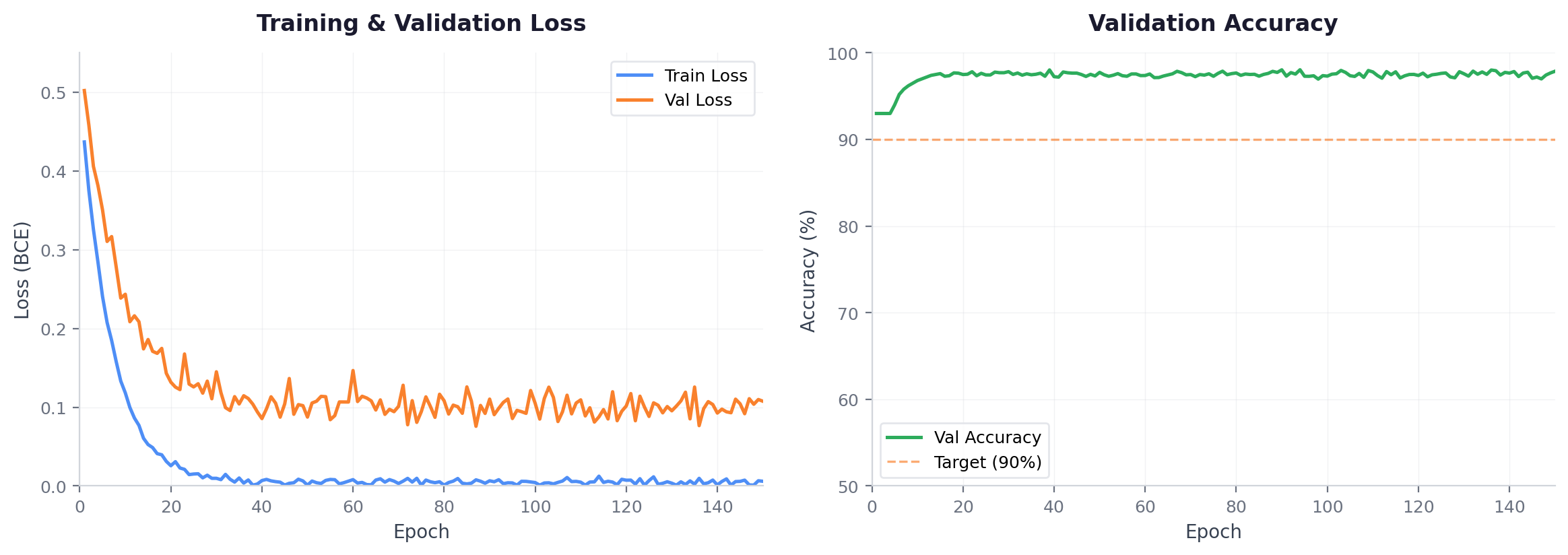

Training Results

I initially planned around 50 epochs but pushed it to 150 to see what would happen. The model converged rapidly: by epoch 15, validation accuracy was already above 97%. The remaining 135 epochs showed essentially no improvement. Train loss fell to near zero while validation loss plateaued around 0.12, a gap that indicates mild overfitting, although validation accuracy stayed high.
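One factor that may contribute to the long plateau is the learning-rate schedule itself. The short calculation below uses the closed form for a step schedule, lr = base_lr × gamma^(epoch // step_size), with the stated values (lr=0.0001, step size 10, gamma=0.1). It assumes the scheduler was stepped once per epoch, which the write-up does not state, and the function name `step_lr` is only illustrative.

```python
def step_lr(base_lr: float, epoch: int, step_size: int = 10, gamma: float = 0.1) -> float:
    """Learning rate at a given epoch under a step decay schedule.

    Assumption: the scheduler is stepped once per epoch, so the rate is
    multiplied by `gamma` every `step_size` epochs.
    """
    return base_lr * gamma ** (epoch // step_size)


# Print the learning rate at a few points in a 150-epoch run.
for epoch in (0, 10, 20, 50, 149):
    print(f"epoch {epoch:3d}: lr = {step_lr(1e-4, epoch):.1e}")
```

Under these assumptions the rate is about 1e-6 by epoch 20 and about 1e-9 by epoch 50, so weight updates late in training would be vanishingly small regardless of how many more epochs were run.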

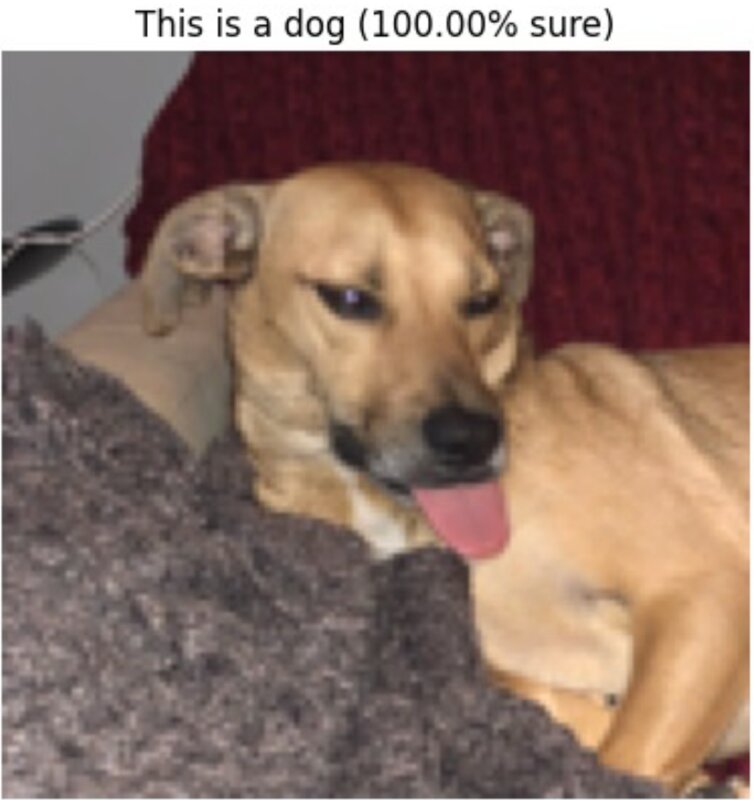

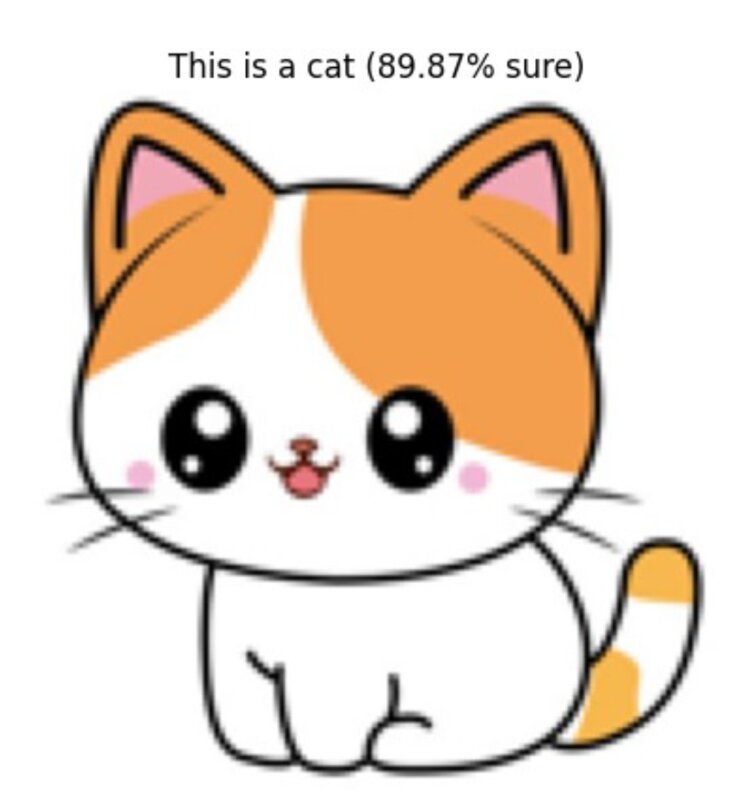

Real-World Testing

I tested the model on personal pet photos and internet images. On clear dog and cat photos it was very confident, reporting 100%. On ambiguous or out-of-distribution images, such as cartoon cats or memes, its confidence dropped appropriately, which suggests the model had learned meaningful features rather than just memorizing the training set.
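The confidence figures above come from passing the model's single output logit through a sigmoid. A minimal sketch of that conversion is below. The function names, the 0.5 threshold, and the choice of "dog" as the positive class are assumptions for illustration; the write-up does not say which class was labeled 1.

```python
import math


def sigmoid(logit: float) -> float:
    """Numerically stable logistic function for a single logit."""
    if logit >= 0:
        return 1.0 / (1.0 + math.exp(-logit))
    e = math.exp(logit)
    return e / (1.0 + e)


def describe(logit: float, threshold: float = 0.5) -> tuple[str, float]:
    """Map a raw logit to a (label, confidence) pair.

    Assumption: the positive class (label 1) is "dog".
    Confidence is the probability assigned to the predicted class.
    """
    p_dog = sigmoid(logit)
    if p_dog >= threshold:
        return "dog", p_dog
    return "cat", 1.0 - p_dog


# A large-magnitude logit gives a confidence that displays as 100%,
# while a logit near zero gives a confidence near 50%.
for logit in (12.0, -12.0, 0.3):
    label, confidence = describe(logit)
    print(f"logit {logit:+.1f} -> {label} ({confidence * 100:.1f}%)")
```

A displayed "100%" is therefore a rounded value: the sigmoid approaches 1 for strongly positive logits but never reaches it exactly in theory.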

This first exposure to machine learning showed me how much of a black box AI can be and, at the same time, how engineerable it is. With the right architecture, data pipeline, and training setup, you can build something that genuinely works without fully understanding every mathematical detail of why it works. That tension between black-box behavior and practical engineering is what made ML click for me.